I have open-sourced AigoTools, a website directory system on GitHub that automatically generates website information. You only need to enter the addresses of the websites you want to include: the system takes screenshots of each site, crawls its information, and processes that information with a large language model (LLM). With this system, you can easily deploy a navigation site with 10,000+ URLs.

GitHub: https://github.com/someu/aigotools

Core Features

Site Management

Automatic Site Information Collection

User Management (Clerk)

Internationalization

Dark/Light Theme Switching

SEO Optimization

Multiple Image Storage Solutions

Open Source UI Design Drafts

Ideas

The project is built with Next.js and NestJS. The main navigation site and the crawling service are separate, so if you don’t need the crawling service you can deploy just the main site on Vercel, which is quick and convenient.

Website information is processed with large language models. The project uses Jina to read website content and OpenAI to summarize and automatically categorize it. The prompts for summarization and categorization are included in the project, and the GPT-4 model generates the summaries.
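As a rough illustration of this read-then-summarize flow, here is a minimal TypeScript sketch. It is not the project's actual code: the function names, the prompt wording, and the 8,000-character truncation limit are all hypothetical, and it assumes Jina's Reader is called by prefixing the target URL with `https://r.jina.ai/`. The sketch only builds the request objects; sending them (for example with `fetch` or the OpenAI SDK) is left out.

```typescript
// Assumption: Jina Reader returns readable page text when the target URL
// is appended to this prefix.
const JINA_READER_PREFIX = "https://r.jina.ai/";

// Build the Reader URL for a site to be included in the directory.
function jinaReaderUrl(siteUrl: string): string {
  return JINA_READER_PREFIX + siteUrl;
}

interface ChatMessage {
  role: "system" | "user";
  content: string;
}

interface ChatRequest {
  model: string;
  messages: ChatMessage[];
}

// Build a chat-completion request body that asks the model to summarize
// the page and pick one category. The prompt text here is a hypothetical
// placeholder, not the prompt shipped with the project.
function buildSummaryRequest(
  pageText: string,
  categories: string[],
  model: string = "gpt-4",
): ChatRequest {
  const system =
    "You summarize websites for a directory. " +
    'Reply with JSON: {"summary": string, "category": string}. ' +
    "Pick the category from: " + categories.join(", ") + ".";
  return {
    model,
    messages: [
      { role: "system", content: system },
      // Truncate long pages to keep the request small (arbitrary limit).
      { role: "user", content: pageText.slice(0, 8000) },
    ],
  };
}
```

The returned object has the shape expected by OpenAI's chat completions endpoint (`model` plus `messages`), so it could be posted as JSON with an API key in the `Authorization` header.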

The crawling service uses Bull.js for queue management, which lets it handle crawling tasks for tens of thousands of sites.
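To show why a queue helps here, the following is a small in-memory stand-in written in TypeScript. It is deliberately not Bull's API (Bull is backed by Redis and persists jobs); it only illustrates the two properties a crawl queue provides: a cap on concurrent jobs and retries for failed ones. The names `runCrawlQueue`, `concurrency`, and `maxAttempts` are hypothetical.

```typescript
// In-memory illustration of a crawl queue. NOT Bull's API.
type CrawlJob = { url: string; attempts: number };
type CrawlHandler = (url: string) => Promise<void>;

async function runCrawlQueue(
  urls: string[],
  handler: CrawlHandler,
  concurrency: number = 3,
  maxAttempts: number = 2,
): Promise<{ done: string[]; failed: string[] }> {
  const pending: CrawlJob[] = urls.map((url) => ({ url, attempts: 0 }));
  const done: string[] = [];
  const failed: string[] = [];

  // Each worker pulls jobs until the queue is empty, so at most
  // `concurrency` handlers run at the same time.
  async function worker(): Promise<void> {
    for (;;) {
      const job = pending.shift();
      if (!job) return;
      job.attempts += 1;
      try {
        await handler(job.url);
        done.push(job.url);
      } catch (err) {
        // Re-queue the job until it has used up its attempts.
        if (job.attempts < maxAttempts) pending.push(job);
        else failed.push(job.url);
      }
    }
  }

  const workers: Promise<void>[] = [];
  for (let i = 0; i < concurrency; i++) workers.push(worker());
  await Promise.all(workers);
  return { done, failed };
}
```

In a real deployment the handler would take the screenshot and fetch the page; a Redis-backed queue additionally survives restarts and can spread jobs across several worker processes, which this sketch does not attempt.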

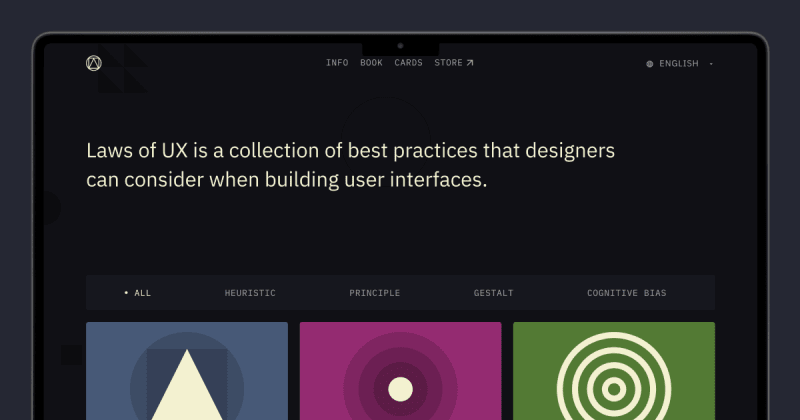

The project’s UI is simple, and I have also open-sourced the design drafts. You can adjust them and build your own site on top of these drafts.

Project Links

GitHub: https://github.com/someu/aigotools

Demo Site: https://www.aigotools.com/